Let's do a tiny bit of preprocessing (lowercasing, smooshing contractions, removing punctuation and numbers, and getting rid of extra spaces).

    filter(series == "TNG") |> # limit to TNG
    summarize(text = paste0(line, collapse = " "),
    clean_text = gsub("[']", "", clean_text),
    clean_text = gsub("[[:punct:]]", " ", clean_text),
    clean_text = gsub('[[:digit:]]+', " ", clean_text),
    clean_text = gsub("[[:space:]]+", " ", clean_text),

To get started, let's create two base R methods for creating dense DTMs. There are three necessary steps: (1) tokenize, (2) create vocabulary, and (3) match and count.

First, each document is split into a list of individual tokens. Second, from these lists of tokens, we need to extract only the unique tokens to create a vocabulary. Finally, we count each time we find a match between a token in a document and a token in the vocabulary.

While the above are essential, there are a few optional steps that functions may or may not take by default. First, the most basic DTM uses the raw counts of each word in a document. Some functions may include the option to weight the matrix. The most common weighting is to normalize by the row totals to get relative frequencies. Since all weightings require raw counts anyway, we will just stop at a count DTM (not to mention that relative frequencies turn an integer matrix into a real-number matrix, which results in a larger object in memory). Second, the columns of our DTM may be sorted by (1) the order they appear in the corpus, (2) alphabetical order, or (3) their frequency in the corpus. The first option will be the fastest and the third the slowest. Third, the function may also incorporate the removal or "stopping" of certain tokens.
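As a rough illustration of the three necessary steps and the optional ones, here is a minimal base R sketch. The toy corpus `docs`, the stop list `stops`, and all object names are hypothetical and chosen only for this example; this is one simple way to build a dense count DTM, not necessarily either of the two base R methods compared in this post.

```r
# Hypothetical toy corpus (not the corpus used in this post)
docs <- c(
  doc1 = "make it so",
  doc2 = "make it so number one",
  doc3 = "engage"
)

# (1) tokenize: split each document on single spaces
tokens <- strsplit(docs, " ", fixed = TRUE)

# (2) create vocabulary: unique tokens, in order of first appearance
vocab <- unique(unlist(tokens, use.names = FALSE))

# (3) match and count: tabulate each document's tokens against the vocabulary
dtm <- t(vapply(
  tokens,
  function(x) tabulate(match(x, vocab), nbins = length(vocab)),
  integer(length(vocab))
))
colnames(dtm) <- vocab

# Optional: weight by row totals to get relative frequencies
# (this converts the integer matrix to a double matrix)
dtm_rel <- dtm / rowSums(dtm)

# Optional: sort columns alphabetically or by corpus frequency
dtm_alpha <- dtm[, order(colnames(dtm)), drop = FALSE]
dtm_freq <- dtm[, order(colSums(dtm), decreasing = TRUE), drop = FALSE]

# Optional: "stop" certain tokens (hypothetical stop list)
stops <- c("it", "so")
dtm_stopped <- dtm[, !colnames(dtm) %in% stops, drop = FALSE]
```

Leaving the columns in order of first appearance requires no extra work, which is consistent with it being the cheapest of the three sorting options.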


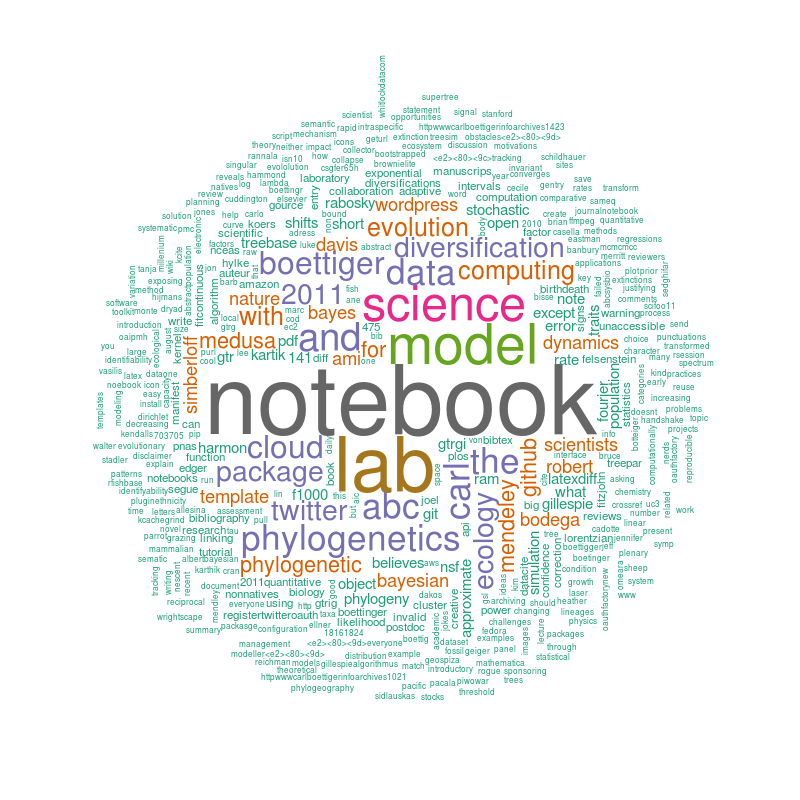

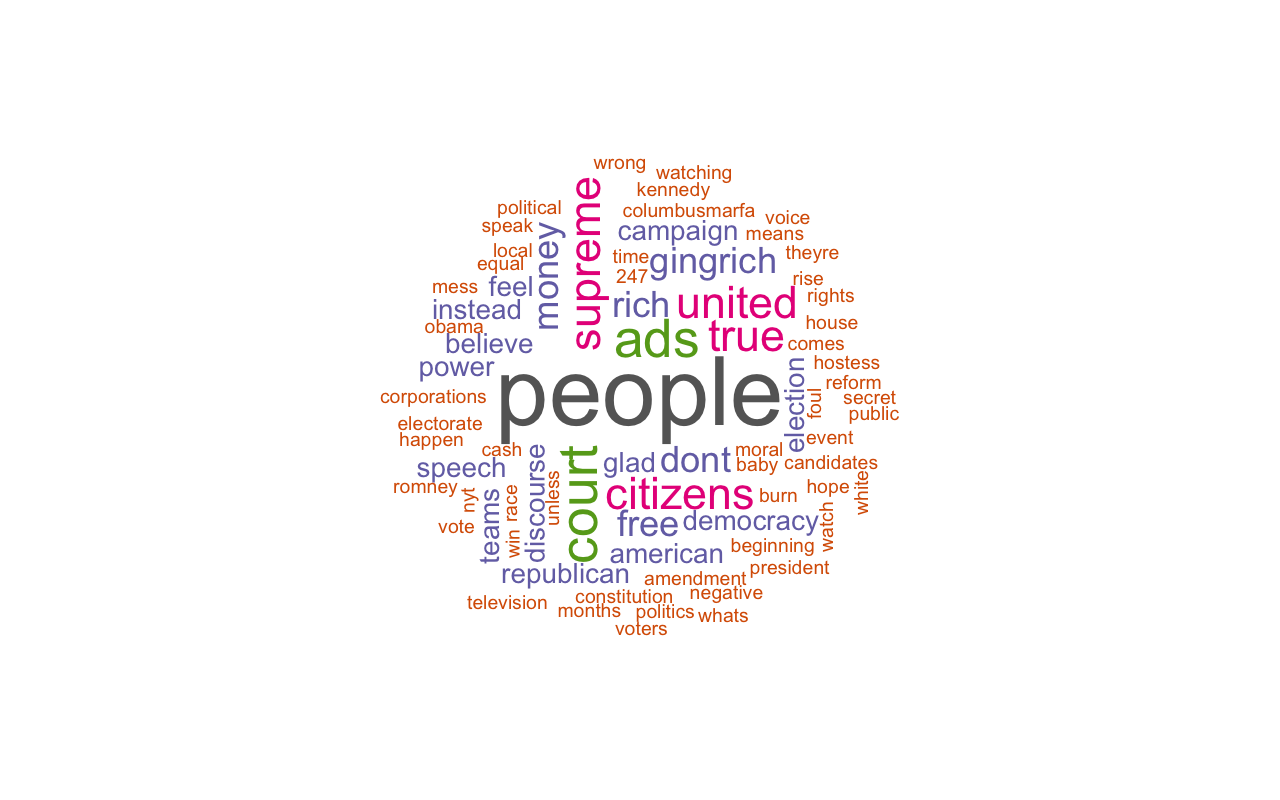

The Document-Term Matrix (DTM) is the foundation of computational text analysis, and as a result there are several R packages that provide a means to build one. What is a DTM? It is a matrix in which each document in some sample of texts (called a corpus) is a row and each unique word in the corpus (often called a type; together, the vocabulary) is a column. The cells of the matrix are typically counts of how many times each unique word occurs in a given document (these occurrences are often called tokens).

Below, I attempt a comprehensive overview and comparison of 15 different methods for creating a DTM. Two are custom functions written in base R. The rest are from eleven text analysis packages. One of these is an R package, text2map, that I developed with Marshall Taylor. (The dtm_builder() function was developed in tandem with writing the original comparison back in December 2020.) These include every package I could find that has functions to create a DTM (the koRpus package does provide a document-term matrix method, but I could not get it to work). Feel free to let me know if I've missed a package that creates a DTM.

Below are the non-text analysis packages we'll be using.

And, here are the text analysis R packages we'll be using.

    # match() returns the position of the matched term or NA
    # and conveniently enough, TRUE/FALSE will be treated as 1/0 by sum():
    data <- read.csv("location", stringsAsFactors=FALSE)
    scores.df = data.frame(score=scores, text=sentences)

I want to remove all stop words: "the", "you", "like", "for", and so on.

    scores = laply(sentences, function(sentence, pos.words)
    # clean up sentences with R's regex-driven global substitute, gsub():
    # compare our words to the dictionaries of positive & negative terms


I have a data frame with more than 100 columns and 1 million rows. The text column contains very long sentences. I have written code to clean the data, but it is not cleaning it.
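One possible way to strip stop words from a text column is sketched below in base R. The data frame `df`, the column names, and the short `stop_words` list are all hypothetical stand-ins for the data described in the question; the approach assumes a single vectorized `gsub()` call over the column is acceptable, which avoids looping over rows.

```r
# Hypothetical data frame standing in for the much larger one in the question
df <- data.frame(
  id = 1:2,
  text = c("I like the movie for you", "The plot was slow"),
  stringsAsFactors = FALSE
)

# Hypothetical stop list; swap in a fuller list as needed
stop_words <- c("the", "you", "like", "for")

# One regex of whole words: \\b keeps "for" from matching inside "forum"
pattern <- paste0("\\b(", paste(stop_words, collapse = "|"), ")\\b")

clean <- tolower(df$text)                        # lowercase first
clean <- gsub(pattern, " ", clean, perl = TRUE)  # drop stop words
clean <- gsub("[[:space:]]+", " ", clean)        # collapse repeated spaces
df$clean_text <- trimws(clean)                   # trim leading/trailing space
```

Because `gsub()` is vectorized over the whole column, the same four lines apply unchanged to a column with many more rows, although runtime and memory will depend on the size of the text.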